BehindLogin

How a SaaS platform turned raw competitor signals into structured intelligence, across five major data source categories

BehindLogin needed to know whether a multi-source AI classification layer was buildable before committing to a full product investment. We ran the proof of concept. It worked. Then we built and launched the thing.

Managed Teams

BehindLogin is a UK-based SaaS company building competitor intelligence tools for B2B businesses.

Their platform monitors competitor activity across digital channels and surfaces it to paying subscribers, helping teams stay ahead of market moves without the manual legwork. The engagement was brokered through Deazy.

The data existed. Making sense of it at scale, across five major data source categories, was the hard part.

BehindLogin’s subscribers were already tracking competitors. The volume of signals wasn’t the problem. The problem was intelligibility. Social posts, app store updates, website changes, email newsletters, and press coverage each behave differently, require different handling, and produce data in entirely different shapes. Stitching them into something a non-technical subscriber could actually act on was the real challenge.

Before committing to a full build, BehindLogin needed confidence that the AI classification layer was technically viable and cost-manageable at scale. That’s where the engagement started.

Source heterogeneity

Nine source types across the five categories, each with its own scraping logic, rate limits, and data shape. LinkedIn caps at ~70 posts per call; X required a custom Selenium + Nitter build to avoid a $5,000/month API bill; email needed secure Google OAuth with encrypted credential storage.
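To make the per-call cap concrete, here is a minimal sketch of paging through a capped source. The `fetch_page` callable, the offset-based paging, and the `max_posts` ceiling are assumptions for illustration; only the ~70-post figure comes from the project.

```python
from typing import Callable, Iterator, List


def fetch_all(fetch_page: Callable[[int, int], List[dict]],
              page_size: int = 70,
              max_posts: int = 500) -> Iterator[dict]:
    """Yield posts from a capped source, one page per call."""
    offset = 0
    while offset < max_posts:
        limit = min(page_size, max_posts - offset)
        page = fetch_page(offset, limit)
        if not page:
            break  # source exhausted
        yield from page
        if len(page) < limit:
            break  # a short page means there is nothing left
        offset += len(page)


# Stand-in for a real scraper call: a fake source holding 150 posts.
_FAKE_POSTS = [{"id": i} for i in range(150)]


def fake_fetch(offset: int, limit: int) -> List[dict]:
    return _FAKE_POSTS[offset:offset + limit]


posts = list(fetch_all(fake_fetch))
```

With the fake source, the loop makes three calls (70, 70, then 10 posts) and stops on the short page.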

AI cost risk

Classifying signals at volume has real token cost implications. The architecture was designed around this from the start: optimised prompts, token-efficient outputs, and scraping kept strictly separate from analysis.
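The cost question can be framed with simple arithmetic. The sketch below is a rough estimator, not the project's costing model: the four-characters-per-token heuristic, the prompt overhead, the output length, and both per-1k prices are placeholder assumptions that would need replacing with real figures.

```python
from typing import Iterable


def estimate_tokens(text: str) -> int:
    """Very rough heuristic: about 4 characters per token for English text."""
    return max(1, len(text) // 4)


def estimate_cost(signals: Iterable[str],
                  prompt_overhead_tokens: int = 150,   # assumed fixed prompt size
                  output_tokens: int = 60,             # assumed compact output
                  price_in_per_1k: float = 0.0005,     # placeholder price
                  price_out_per_1k: float = 0.0015) -> float:  # placeholder price
    """Estimate the spend for classifying a batch of signals."""
    signals = list(signals)
    tokens_in = sum(estimate_tokens(s) + prompt_overhead_tokens for s in signals)
    tokens_out = output_tokens * len(signals)
    return (tokens_in / 1000 * price_in_per_1k
            + tokens_out / 1000 * price_out_per_1k)


# Ten signals of 400 characters each.
cost = estimate_cost(["a" * 400] * 10)
```

Under these assumptions the prompt overhead outweighs the signal text itself, which is why shorter prompts and compact outputs matter at volume.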

Architecture extensibility

The system needed to grow into predictive and prescriptive intelligence without a rebuild. That meant building scraping, preprocessing, LLM analysis, and reporting as fully decoupled services from day one.
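As an illustration of what decoupling buys, here is a minimal sketch in which each stage is a plain function that only sees the previous stage's output. All function names and sample signals are hypothetical, and the keyword rule in `analyse` is a stand-in for the LLM call, not the real classifier.

```python
from collections import Counter
from typing import Callable, Dict, List

Signal = Dict[str, str]


def scrape() -> List[Signal]:
    """Stand-in scraper returning two hard-coded signals."""
    return [
        {"source": "email", "text": "  We have cut our Pro plan price by 20%  "},
        {"source": "news", "text": "Acme is hiring 30 engineers in Leeds"},
    ]


def preprocess(signals: List[Signal]) -> List[Signal]:
    """Normalise whitespace without touching any other field."""
    return [{**s, "text": " ".join(s["text"].split())} for s in signals]


def analyse(signals: List[Signal]) -> List[Signal]:
    """Keyword rule standing in for the LLM classification step."""
    def label(text: str) -> str:
        lowered = text.lower()
        if "price" in lowered:
            return "pricing"
        if "hiring" in lowered:
            return "recruitment"
        return "other"
    return [{**s, "category": label(s["text"])} for s in signals]


def report(signals: List[Signal]) -> Dict[str, int]:
    """Count signals per category."""
    return dict(Counter(s["category"] for s in signals))


def run_pipeline(stages: List[Callable]):
    data = stages[0]()
    for stage in stages[1:]:
        data = stage(data)
    return data


summary = run_pipeline([scrape, preprocess, analyse, report])
```

Because each stage depends only on the shape of its input, a later predictive stage could be appended to the list without editing the existing ones.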

UX for non-technical users

The output had to be immediately usable by subscribers who think in business terms, not data structures.

Proof of concept first. Full build second. Same team throughout.

No throwaway build. The POC was the foundation the MVP was built on.

We ran two consecutive engagements: a tightly scoped proof of concept, then the full MVP build, with the same team carrying through both. No handover, no context lost.

Phase 1: Proof of Concept

We validated the core AI classification layer against a single data source (a monitored email inbox) before touching the rest. Built on FastAPI, PostgreSQL, and LangChain, with Selenium, BrightData, and Apify handling the scraping layer. The goal was to prove the intelligence engine worked reliably before scaling across all five data source categories. It did. BehindLogin approved the full build.

Phase 2: MVP Build

The team built a full competitor intelligence platform on top of the validated foundation. The MVP stack moved to React and Tailwind on the frontend, Python on the backend, and OpenAI GPT as the LLM, with BrightData and Apify continuing as the scraping backbone across all five categories. CI/CD was set up via GitHub Actions with SSH-based deployment, covering both the customer-facing and staff-facing applications.

Data sources integrated

LinkedIn, Facebook, Instagram, X

Platform-specific API and scraping approaches; rate limiting and auth management per source.

Company websites (multiple)

Change detection across competitor web properties; structured extraction of product and pricing page updates.
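One common way to detect page changes is to fingerprint the visible text and compare it with the last stored fingerprint. The sketch below assumes that approach; the regex tag stripping is deliberately crude, and the function names are hypothetical rather than taken from the platform.

```python
import hashlib
import re


def fingerprint(html: str) -> str:
    """Hash the page's visible text, ignoring tags, case, and whitespace."""
    text = re.sub(r"<[^>]+>", " ", html)      # crude tag removal
    text = " ".join(text.split()).lower()     # collapse whitespace
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def has_changed(previous_html: str, current_html: str) -> bool:
    """True when the visible text differs between two snapshots."""
    return fingerprint(previous_html) != fingerprint(current_html)


same = has_changed("<p>Pro: GBP 49</p>", "<p>Pro:   GBP 49</p>")
different = has_changed("<p>Pro: GBP 49</p>", "<p>Pro: GBP 59</p>")
```

Normalising before hashing keeps markup-only or whitespace-only edits from being reported as pricing or product changes.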

Email newsletters

Inbox monitoring and content extraction; full-inbox scanning to catch competitor signals from any sender, not just known newsletters.

Apple App Store, Google Play

Version tracking, update detection, and review monitoring; Play Store version history handled separately.
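Update detection reduces to comparing the last version seen with the latest one listed. This is a minimal sketch that assumes purely numeric, dot-separated version strings; real store listings can include other formats, which this does not handle.

```python
from typing import Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    """Turn '2.10.0' into (2, 10, 0) so parts compare numerically."""
    return tuple(int(part) for part in version.split("."))


def detect_update(last_seen: str, latest: str) -> bool:
    """True when `latest` is a newer version than `last_seen`."""
    a, b = parse_version(last_seen), parse_version(latest)
    width = max(len(a), len(b))
    # Pad with zeros so '3.0' and '3.0.0' are treated as equal.
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return b > a
```

Comparing integer tuples rather than strings avoids ranking "2.9.1" above "2.10.0".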

News websites (multiple)

RSS and direct scraping of relevant industry and competitor press coverage.
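For the RSS side, a minimal sketch using only the standard library might look like this. The sample feed, the keyword match on titles, and the `matching_items` name are assumptions for illustration; production feeds would need fetching, error handling, and richer matching.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

# Tiny hard-coded RSS 2.0 feed standing in for a fetched one.
SAMPLE_FEED = """<rss version="2.0"><channel>
<title>Example Industry News</title>
<item><title>Acme launches new tier</title><link>https://example.com/a</link></item>
<item><title>Weather update</title><link>https://example.com/b</link></item>
</channel></rss>"""


def matching_items(feed_xml: str, keywords: List[str]) -> List[Dict[str, str]]:
    """Return feed items whose title mentions any keyword (case-insensitive)."""
    root = ET.fromstring(feed_xml)
    hits = []
    for item in root.iter("item"):
        title = item.findtext("title", default="")
        if any(k.lower() in title.lower() for k in keywords):
            hits.append({"title": title,
                         "link": item.findtext("link", default="")})
    return hits


hits = matching_items(SAMPLE_FEED, ["acme"])
```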

Modular scraper design

Each source runs as a decoupled scraping service under a central ScraperManager, handling scheduling, rate-limiting, and backoff strategies independently. New sources slot in without touching the AI or UI layers.
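The sketch below shows the general shape of such a manager: a registry where each source carries its own retry and backoff settings, and one failing source does not block the rest. Only the `ScraperManager` name comes from the project; the methods, parameters, and exponential backoff rule are assumptions, and scheduling is left out for brevity.

```python
import time
from typing import Callable, Dict, List


class ScraperManager:
    """Toy registry running each source with its own backoff settings."""

    def __init__(self, sleep: Callable[[float], None] = time.sleep):
        self._scrapers: Dict[str, dict] = {}
        self._sleep = sleep  # injectable so demos and tests need not wait

    def register(self, name: str, scrape: Callable[[], List[dict]],
                 max_retries: int = 3, base_delay: float = 1.0) -> None:
        self._scrapers[name] = {"scrape": scrape,
                                "max_retries": max_retries,
                                "base_delay": base_delay}

    def run(self, name: str) -> List[dict]:
        cfg = self._scrapers[name]
        for attempt in range(cfg["max_retries"] + 1):
            try:
                return cfg["scrape"]()
            except Exception:
                if attempt == cfg["max_retries"]:
                    raise
                # Exponential backoff: base, 2x base, 4x base, ...
                self._sleep(cfg["base_delay"] * 2 ** attempt)
        return []

    def run_all(self) -> Dict[str, List[dict]]:
        results = {}
        for name in self._scrapers:
            try:
                results[name] = self.run(name)
            except Exception:
                results[name] = []  # isolate the failing source
        return results


# Demo: a source that is rate limited twice before succeeding.
delays: List[float] = []
calls = {"n": 0}


def flaky_news() -> List[dict]:
    calls["n"] += 1
    if calls["n"] < 3:
        raise RuntimeError("rate limited")
    return [{"title": "Acme raises prices"}]


manager = ScraperManager(sleep=delays.append)
manager.register("news", flaky_news)
manager.register("app_store", lambda: [{"version": "2.1.0"}])
results = manager.run_all()
```

Adding a source here means one more `register` call, which mirrors the point that new sources should not require changes to the AI or UI layers.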

Automated competitor analysis reports

Per-competitor reports covering executive summary, key insights, category distribution, and closing recommendations. All output as JSON for flexible rendering and export.
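A report with those four sections could be modelled roughly as follows. The field names and sample values are hypothetical; the actual JSON schema is not shown in this case study.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class CompetitorReport:
    """Hypothetical report shape mirroring the four sections described."""
    competitor: str
    executive_summary: str
    key_insights: List[str] = field(default_factory=list)
    category_distribution: Dict[str, int] = field(default_factory=dict)
    recommendations: List[str] = field(default_factory=list)

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)


example = CompetitorReport(
    competitor="Acme",
    executive_summary="Acme focused on pricing changes this month.",
    key_insights=["Pro plan price reduced"],
    category_distribution={"pricing": 3, "recruitment": 1},
    recommendations=["Review positioning of the mid-tier plan"],
)
payload = example.to_json()
```

Keeping the output as plain JSON means the same payload can feed the dashboard, an export, or any later rendering layer.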

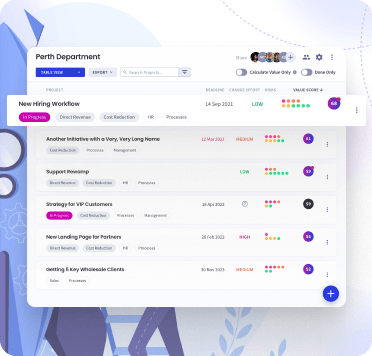

Subscriber dashboard

A configurable dashboard with grid, list, and carousel views; live filtering by category, source, and competitor; and progressive post expansion from title to AI summary to full text. A staff-facing admin application runs on the same codebase.

Freemium access model

A freemium tier with sign-up triggers, account management, and competitor-following preferences. New subscribers get AI-assisted competitor recommendations scored against their own company profile.
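To show what "scored against a company profile" can mean in the simplest case, the sketch below ranks candidates by tag overlap. This Jaccard score is a deliberately simple stand-in, not the AI-assisted method used on the platform, and the tags and company names are invented.

```python
from typing import Dict, List, Set, Tuple


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Overlap between two tag sets, from 0.0 (none) to 1.0 (identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def recommend(profile_tags: Set[str],
              candidates: Dict[str, Set[str]],
              top_n: int = 3) -> List[Tuple[str, float]]:
    """Rank candidate competitors by similarity to the subscriber profile."""
    scored = [(name, jaccard(profile_tags, tags))
              for name, tags in candidates.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]


suggestions = recommend(
    {"crm", "b2b", "saas"},
    {
        "AlphaCRM": {"crm", "saas", "b2b"},
        "ShopFast": {"ecommerce", "b2c"},
        "PipeFlow": {"crm", "sales"},
    },
    top_n=2,
)
```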

Data architecture

Built for scale: tiered hot/cold storage, horizontal partitioning by date range, and background refresh jobs to keep load times low. Historical lookback ranges from 6 months to 2 years.
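Date-range partitioning and hot/cold tiering both come down to routing a record by its date. The sketch below assumes monthly partitions and a 180-day hot window; both choices, and the `signals_YYYY_MM` naming, are illustrative rather than the platform's actual settings.

```python
from datetime import date


def partition_key(signal_date: date) -> str:
    """Name of the (assumed monthly) partition a signal belongs to."""
    return f"signals_{signal_date.year}_{signal_date.month:02d}"


def storage_tier(signal_date: date, today: date, hot_days: int = 180) -> str:
    """Recent signals stay in hot storage; older ones move to cold."""
    return "hot" if (today - signal_date).days <= hot_days else "cold"
```

A dashboard query over the last few months would then touch only a handful of hot partitions, while a two-year lookback reaches into cold storage.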

More services

Fully decoupled services across scraping, preprocessing, LLM analysis, and reporting. Predictive and prescriptive capabilities can be layered in without touching what’s already built.

Signed off. Ready to go live.

The platform has been reviewed and approved by BehindLogin. Both the proof of concept and the full MVP were delivered within scope, with the same team working across both engagements. No handover friction, no context lost between phases.

What the build produced is a system that takes competitor activity across five major data source categories and turns it into structured, categorised intelligence, automatically. Feature updates, pricing shifts, recruitment signals, new product moves. All classified, summarised, and surfaced to subscribers without manual processing.

The architecture was also built with the next phase in mind. The modular design means the platform can grow from descriptive intelligence (what competitors are doing now) toward predictive and eventually prescriptive capabilities, without a rebuild. That groundwork is already in place.

The Signal

What started as “can we classify competitor signals from a single email inbox?” became a robust multi-source intelligence platform, signed off and ready for subscribers. The proof-of-concept-first model works because the risk is front-loaded. By the time the full build starts, there are no architecture surprises.

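POC TECH STACK

API Framework: FastAPI

Database: PostgreSQL

Scraping: Selenium, BrightData, Apify

AI Framework: LangChain

Hosting

MVP TECH STACK

Frontend: React, Tailwind

Backend: Python

LLM: OpenAI GPT

Scraping: BrightData, Apify

CI/CD: GitHub Actions

DATA SOURCES

LinkedIn, Facebook, Instagram, X, company websites, email newsletters, Apple App Store, Google Play, news websites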

“I will go as far as to say that this is one of, if not THE, best team I have ever worked with.”

“I think it’s the best sprint 1 outcome I have maybe ever seen.”
